David Leeftink

Radboud University

Nijmegen, the Netherlands

david.leeftink (at) ru.nl.

I am a PhD candidate at the Donders Institute for Brain, Cognition and Behaviour (Radboud University), supervised by Dr. Max Hinne and Prof. Marcel van Gerven.

My research focuses on reinforcement learning and control for dynamical systems under uncertainty. I approach deep RL through the lens of optimal control theory, with the goal of developing decision-making methods that are robust and deployable in real-world systems.

A central question in my work is: how can we make reliable decisions under model uncertainty? To address this, I focus on three directions:

-

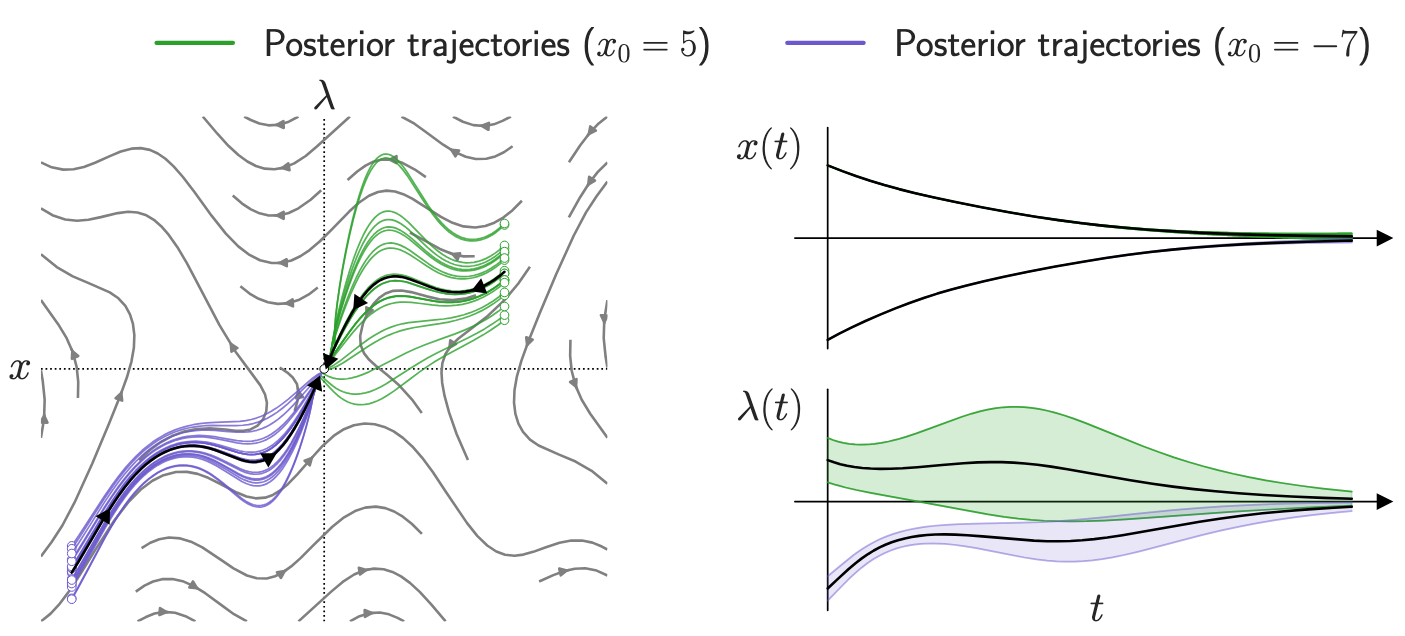

Probabilistic control under uncertainty: optimal control methods for systems with unknown or partially known dynamics.

-

Data-efficient optimization of physical systems: Bayesian optimization for high-cost and real-world settings, such as semiconductor manufacturing and neural implants.

-

Deep Reinforcement Learning: model-based and model-free reinforcement learning from a dynamical systems perspective, aiming to improve data-efficiency and interpretability.

Ultimately, my goal is to root modern reinforcement learning algorithms in the notions of robustness, safety, and stability.

news

| Date | Update |
|---|---|
| Mar 15, 2026 | Paper accepted: Our recent work on Bayesian Optimization for Semiconductor Manufacturing has been accepted for publication in IFAC’s Control Engineering Practice. |
| Dec 09, 2025 | Talk: I presented our recent work on Mean Hamiltonian Minimization in a lightning-round talk at the Workshop on Stochastic Planning & Control of Dynamical Systems at CDC in Rio de Janeiro on December 9, 2025. |
| Jul 17, 2025 | Paper accepted: Our work on Mean Hamiltonian Minimization has been accepted at the IEEE Conference on Decision and Control (CDC) 2025. |
| Jun 12, 2025 | Talk: I gave a contributed talk at the Workshop on Theory of Control and Reinforcement Learning at CWI, Amsterdam, on June 19, 2025. |